The American doctoral landscape is currently navigating its most significant transformation since the advent of the internet. As Artificial Intelligence (AI) matures from a speculative tool to a core component of the research infrastructure, the standards for what constitutes a “high-quality” dissertation are shifting. In Tier-1 research universities across the United States—from the Ivy League to major state systems—faculty senates and graduate divisions are rewriting the rulebooks to balance technological efficiency with academic integrity.

This evolution is not merely about using chatbots to brainstorm titles; it is about a fundamental shift in how data is synthesized, how literature is mapped, and how the “original contribution to knowledge” is defined in an era of machine-augmented intelligence.

The Paradigm Shift: From Search Engines to Synthesis Engines

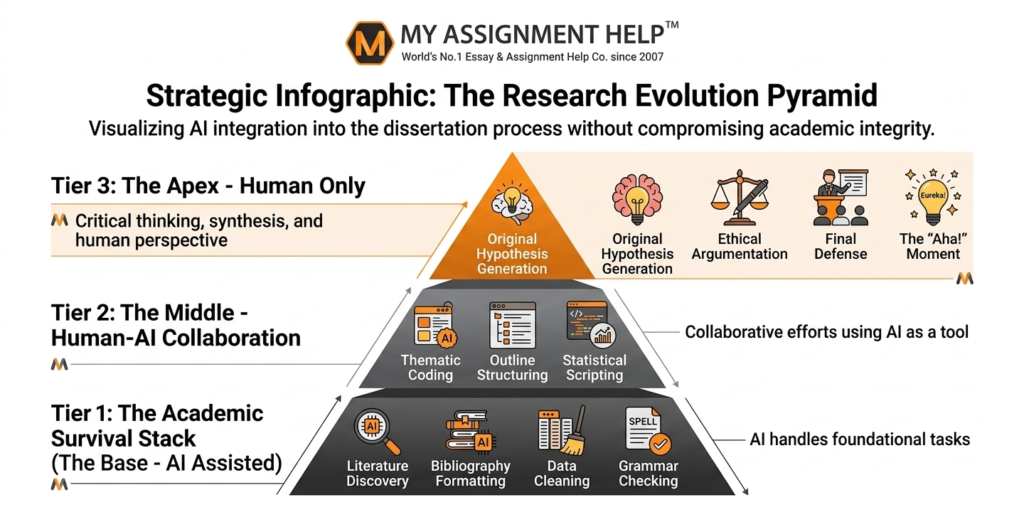

Traditionally, the first two years of a PhD program in the US were dedicated to the “grunt work” of research: manual bibliography building and exhaustive literature coding. AI tools like Elicit, ResearchRabbit, and Consensus have compressed these timelines. These platforms let researchers map citation networks in seconds and surface candidate research gaps far faster than the months of library residency this once required.

However, this efficiency comes with a new set of expectations. Because the mechanical aspects of research are now faster, doctoral committees are raising the bar for critical analysis. It is no longer enough to summarize existing literature; candidates are expected to provide deeper cross-disciplinary syntheses that AI cannot yet replicate. For students navigating these heightened expectations, seeking professional help with dissertation writing has become a strategic move to ensure their work demonstrates the experience, expertise, authoritativeness, and trustworthiness (E-E-A-T) that committees at modern American universities look for.

Data Analysis and the Rise of “Computational Originality”

In STEM and Social Science departments, the definition of “original data” is being challenged. Generative AI and Large Language Models (LLMs) are being used to clean massive datasets, write Python scripts for statistical modeling, and even simulate certain experimental environments.

- Automated Coding: In qualitative research, AI tools can now perform initial thematic coding on hundreds of interview transcripts, allowing the researcher to focus on high-level theory building.

- Predictive Modeling: In fields like epidemiology or urban planning, AI-driven simulations are becoming standard for validating hypotheses before physical data collection begins.

- Language Refinement: For the international student community in the US, AI has leveled the playing field by assisting with grammatical precision, though the “human voice” remains the gold standard for defense.

While these tools are powerful, they require a new form of literacy. Students are now being evaluated on their prompt engineering for research and on their ability to audit AI outputs for “hallucinations,” the term for fabricated citations or data that an AI presents as real.
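One way to picture this kind of audit is a short Python sketch that compares AI-suggested references against a bibliography the researcher has already checked by hand. This is only an illustration under stated assumptions: the function names `normalize` and `audit_citations`, the title-plus-year matching rule, and the sample entries are all hypothetical, and a match here does not replace opening the source itself.

```python
import re


def normalize(title: str) -> str:
    """Lowercase a title and collapse punctuation and whitespace into single spaces."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def audit_citations(ai_suggested, verified_library):
    """Split AI-suggested citations into confirmed and unverified lists.

    A citation counts as confirmed only if its normalized title and year
    both appear in the researcher's hand-verified library.
    """
    verified_keys = {(normalize(ref["title"]), ref["year"]) for ref in verified_library}
    confirmed, unverified = [], []
    for ref in ai_suggested:
        key = (normalize(ref["title"]), ref["year"])
        if key in verified_keys:
            confirmed.append(ref)
        else:
            unverified.append(ref)
    return confirmed, unverified


# Hypothetical entries for illustration only; these are not real references.
verified_library = [
    {"title": "Example Study of Graduate Writing", "year": 2021},
    {"title": "A Placeholder Survey of Citation Practices", "year": 2019},
]
ai_suggested = [
    {"title": "Example study of graduate writing.", "year": 2021},
    {"title": "An Invented Paper That Sounds Plausible", "year": 2022},
]

confirmed, unverified = audit_citations(ai_suggested, verified_library)
for ref in unverified:
    print(f"NEEDS MANUAL CHECK: {ref['title']} ({ref['year']})")
```

Anything that lands in `unverified` is not necessarily fake; it simply has not been confirmed yet and needs to be traced to a real publication before it goes into the dissertation.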

The Ethical Frontier: Plagiarism 3.0 and Disclosure

The Council of Graduate Schools (CGS) has recently highlighted the need for clear institutional policies regarding AI. The US academic standard is moving toward a “Disclosure Model.”

- AI as a Co-Pilot, Not an Author: A growing number of US universities now require an “AI Disclosure Statement” in the dissertation’s methodology or acknowledgments section.

- The Hallucination Risk: Academic integrity is no longer just about avoiding plagiarism; it is about verifying truth. A dissertation that cites non-existent AI-generated sources is now considered a major ethical breach, often resulting in immediate disqualification.

- The Global Standard: While this post focuses on the US, the trend is global. Students often look for online assignment help to understand how these standards differ between the US and other regions such as the UK, so that their work complies with the norms of the institution where they will submit.
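A disclosure statement is easier to write if AI use is logged as the work happens. The Python sketch below shows one possible way to keep such a log and turn it into draft text. The `AIUse` record, its fields, and the wording of the generated statement are assumptions made for illustration; the required format will depend on each institution’s policy.

```python
from dataclasses import dataclass


@dataclass
class AIUse:
    """One logged use of an AI tool during the dissertation (hypothetical schema)."""
    tool: str
    purpose: str
    chapter: str
    human_verified: bool


def disclosure_statement(entries):
    """Build a plain-text draft disclosure from the logged entries."""
    if not entries:
        return "No generative AI tools were used in preparing this dissertation."
    lines = ["The following AI tools were used in preparing this dissertation:"]
    for entry in entries:
        status = (
            "outputs verified by the author"
            if entry.human_verified
            else "outputs NOT yet verified"
        )
        lines.append(f"- {entry.tool} ({entry.chapter}): {entry.purpose}; {status}.")
    return "\n".join(lines)


# Illustrative log; the chapter assignments and the second tool name are made up.
usage_log = [
    AIUse("Elicit", "literature mapping", "Chapter 2", True),
    AIUse("LLM assistant", "first-pass thematic coding", "Chapter 4", False),
]

print(disclosure_statement(usage_log))
```

Flagging unverified entries in the draft makes it obvious which AI-assisted steps still need a human check before submission.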

Addressing E-E-A-T: Why Human Expertise Still Rules

Google’s E-E-A-T guidelines are increasingly relevant to academic publishing. Search engines and academic repositories alike are prioritizing content that shows “Experience” and “Expertise.” AI can simulate knowledge, but it cannot simulate the experience of conducting a four-year longitudinal study or the authoritativeness of a scholar who has defended their work before a committee of experts.

The modern dissertation must demonstrate a “Human-in-the-loop” signature. This means the prose should reflect personal voice, nuanced understanding of cultural contexts, and a level of skepticism toward automated results.

Key Takeaways

- Efficiency vs. Depth: AI reduces the time spent on manual labor, but universities are responding by requiring deeper analytical rigor.

- Mandatory Disclosure: Transparently documenting the use of AI tools is becoming a standard requirement for dissertation submission in the US.

- Validation is Key: The primary role of the PhD candidate is shifting from “Information Gatherer” to “Information Verifier.”

- E-E-A-T Matters: High-quality research must emphasize the human element—originality and ethical oversight—to pass modern plagiarism and AI detection filters.


FAQ: Navigating AI in US Dissertations

Q1: Is using AI to write my dissertation considered plagiarism?

In most US institutions, using AI to generate text and passing it off as your own is considered “AI-giarism.” However, using AI for brainstorming, editing, or data analysis is often permitted, provided it is disclosed.

Q2: Can AI-generated citations be trusted?

No. LLMs are prone to “hallucinations” where they create plausible-sounding but entirely fake academic references. Every citation must be manually verified by the researcher.

Q3: How do US universities detect AI-written content?

Universities use advanced detection tools like Turnitin’s AI writing indicator and GPTZero. More importantly, doctoral committees look for shifts in “voice” and the absence of critical, nuanced argumentation that characterizes human doctoral-level writing.

Q4: Should I mention AI in my Methodology chapter?

Yes. If AI was used for data processing, coding, or translation, it should be explicitly mentioned in your methodology to maintain transparency and authoritativeness.

References & Data Sources

- Council of Graduate Schools (2024): The Impact of Generative AI on Graduate Education. [CGS Reports]

- Modern Language Association (MLA) & APA (2023): Joint Statement on AI-Generated Content and Citation Standards.

- US Department of Education (2023): Artificial Intelligence and the Future of Teaching and Learning.

- Nature (2023): The Role of AI in Scientific Writing: Ethics and Transparency.

Author Bio: Dr. Sarah Jenkins

Dr. Sarah Jenkins is a Senior Academic Consultant at MyAssignmentHelp. With a PhD in Educational Technology from a leading US university and over 12 years of experience in content strategy and academic research, she specializes in helping doctoral candidates navigate the intersection of technology and traditional scholarship. Dr. Jenkins is a frequent contributor to discussions on E-E-A-T in academic publishing and has overseen the development of research frameworks for students across the US, UK, and Australia.